Extended Tactile Perception

Vibration Sensing through Tools and Grasped Objects

Humans display the remarkable ability to sense the world through tools and other held objects. For example, we are able to pinpoint impact locations on a held rod and tell apart different textures using a rigid probe.

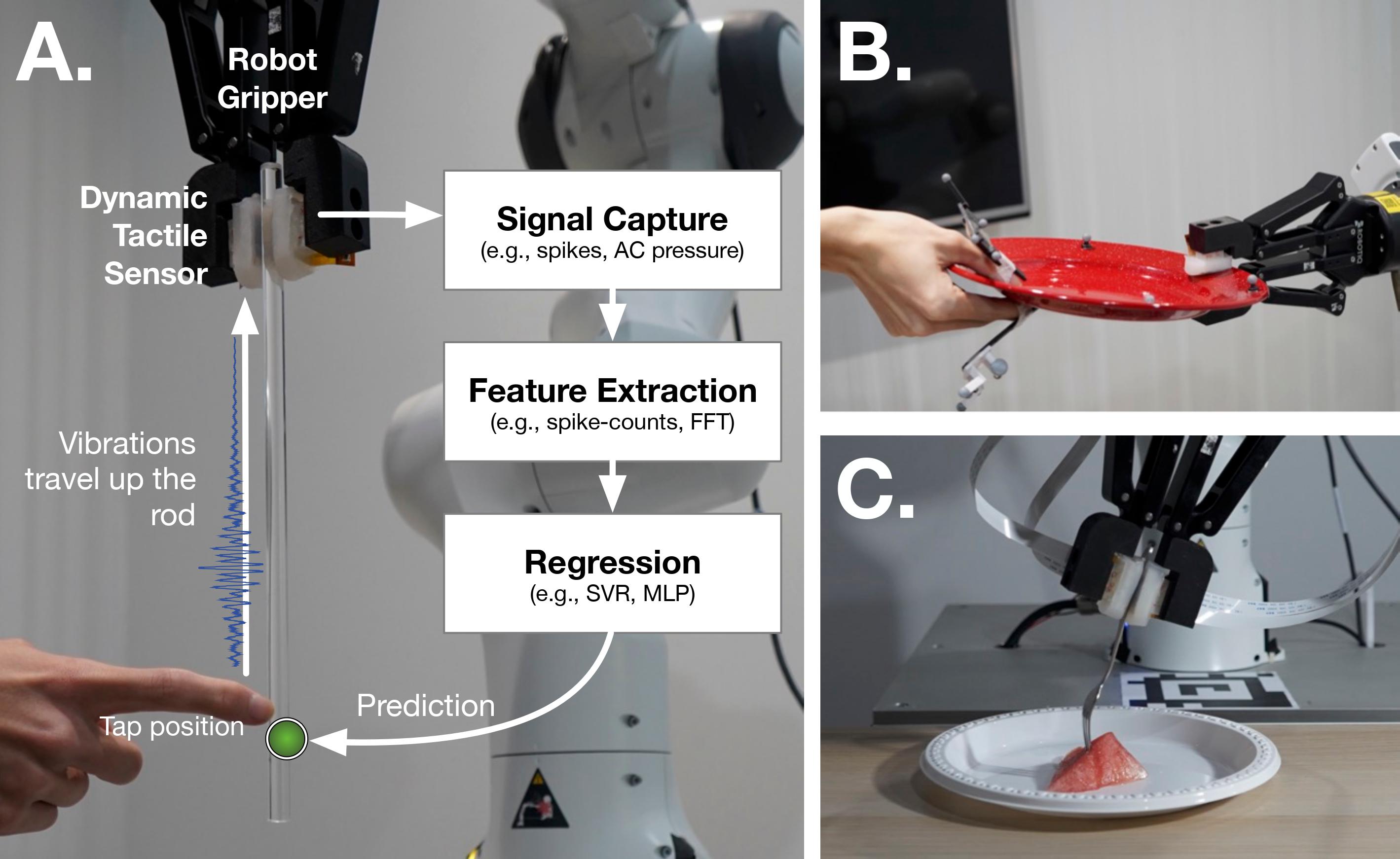

In this work, we consider how to give robots a similar capacity, i.e., to embody tools and extend perception using standard grasped objects. We propose that vibro-tactile sensing using dynamic tactile sensors on the robot fingers, along with machine learning models, enables robots to decipher contact information that is transmitted as vibrations along rigid objects.

We demonstrate that fine localization on a held rod is possible using our approach. Next, we show that vibro-tactile perception can lead to reasonable grasp stability prediction during object handover, and accurate food identification using a standard fork.
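To make the idea of decoding contact location from vibrations more concrete, here is a minimal toy sketch. It is not the method from the paper: the signal model (each vibration mode excited in proportion to `sin(k*pi*x)` at impact location `x`), the sample rate, the mode frequencies, and all function names are assumptions made up for illustration, and a nearest-centroid rule stands in for the learned models.

```python
import math
import random

SAMPLE_RATE = 1000                  # Hz, hypothetical sensor sampling rate
NUM_SAMPLES = 200                   # samples per vibration window
MODE_FREQS = (50.0, 100.0, 150.0)   # Hz, hypothetical vibration modes of the rod


def simulate_impact(x, rng, noise=0.05):
    """Toy vibration at the fingers for an impact at normalized location x in (0, 1).

    Assumption: mode k is excited in proportion to sin(k * pi * x), then decays.
    """
    signal = []
    for n in range(NUM_SAMPLES):
        t = n / SAMPLE_RATE
        s = sum(
            math.sin((k + 1) * math.pi * x) * math.sin(2 * math.pi * f * t)
            for k, f in enumerate(MODE_FREQS)
        )
        signal.append(s * math.exp(-5 * t) + rng.gauss(0, noise))
    return signal


def mode_features(signal):
    """Project the signal onto a sinusoid at each mode frequency."""
    feats = []
    for f in MODE_FREQS:
        proj = sum(
            s * math.sin(2 * math.pi * f * n / SAMPLE_RATE)
            for n, s in enumerate(signal)
        )
        feats.append(2 * proj / len(signal))
    return feats


def fit_centroids(locations, rng, per_location=5):
    """Average the features of a few simulated impacts per candidate location."""
    centroids = {}
    for x in locations:
        feats = [mode_features(simulate_impact(x, rng)) for _ in range(per_location)]
        centroids[x] = [sum(col) / per_location for col in zip(*feats)]
    return centroids


def predict_location(centroids, signal):
    """Return the candidate location whose centroid is closest in feature space."""
    feats = mode_features(signal)
    return min(centroids, key=lambda x: math.dist(centroids[x], feats))


rng = random.Random(0)
LOCATIONS = [0.1, 0.3, 0.5, 0.7, 0.9]
centroids = fit_centroids(LOCATIONS, rng)
predictions = [predict_location(centroids, simulate_impact(x, rng)) for x in LOCATIONS]
print(predictions)
```

Real vibro-tactile signals are far less clean than this toy model, which is why the approach described above relies on learned models rather than hand-picked mode projections.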

For more on this work, please look at the following resources:

- arXiv paper with latest update
- Code and Data
- Slides

If you build upon our results and ideas, please use this citation.

    @inproceedings{taunyazov2021extended,
      title = {Extended Tactile Perception: Vibration Sensing through Tools and Grasped Objects},
      author = {Taunyazov, Tasbolat and Song, Luar Shui and Lim, Eugene and See, Hian Hian and Lee, David and Tee, Benjamin CK and Soh, Harold},
      booktitle = {IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS)},
      pages = {1755--1762},
      year = {2021},
      organization = {IEEE},
    }